Can a lobster feel pain in the same way as you or I?

We know that they have the same sensors – called nociceptors – that cause us to flinch or cry when we are hurt. And they certainly behave like they are sensing something unpleasant. When a chef places them in boiling water, for instance, they twitch their tails as if they are in agony.

But are they actually “aware” of the sensation? Or is that response merely a reflex?

When you or I perform an action, our minds are filled with a complex conscious experience. We can’t just assume that this is also true for other animals, however – particularly ones with brains so different from our own. It’s perfectly possible – some scientists would even argue that it’s likely – that a creature like a lobster lacks any kind of internal experience, let alone the rich world inside our heads.

“With a dog, who behaves quite a lot like us, who is in a body which is not too different from ours, and who has a brain that is not too different from ours, it’s much more plausible that it sees things and hears things very much like we do, than to say that it is completely ‘dark inside’, so to speak,” says Giulio Tononi, a neuroscientist at the University of Wisconsin-Madison. “But when it comes down to a lobster, all bets are off.”

The question of whether other brains – quite alien to our own – are capable of awareness is just one of the many conundrums that arise when scientists start thinking about consciousness. When does an awareness of our own being first emerge in the brain? Why does it feel the way it does? And will computers ever be able to achieve the same internal life?

Tononi may have a solution to these puzzles. His "integrated information theory" is one of the most exciting theories of consciousness to have emerged over the last few years, and although it is not yet proven, it provides some testable hypotheses that may soon give a definitive answer.

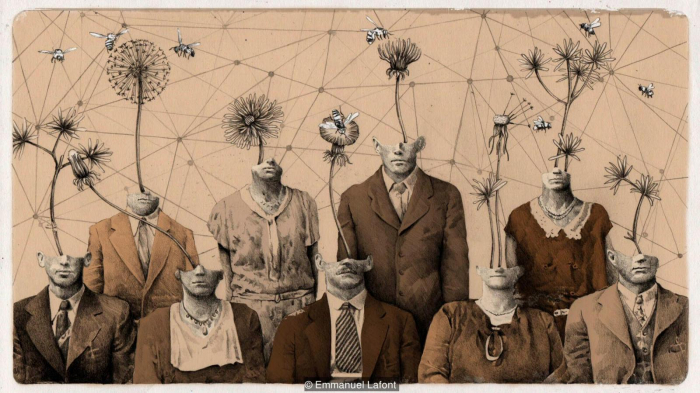

We currently have no way of knowing whether lobsters have a conscious inner life – or whether their behaviour is purely reflex (Credit: Emmanuel Lafont)

Tononi says his fascination arose when he was a teenager with a “typically adolescent” preoccupation with ethics and philosophy. “I realised that knowing what consciousness is and how it came about is crucial to understanding our place in the universe and what we do with our lives,” he says.

At that age, he did not know the best path to follow to pursue those questions – would it be mathematics, or philosophy? – but he eventually settled on medicine. And the clinical experience helped to fertilise his young mind. “There is really something special about having a direct exposure to neurological cases and psychotic cases,” he says. “It really forces you to face directly what happens to patients when they lose consciousness or lose the components of consciousness in ways that are really difficult to imagine if you didn’t see that it actually happens.”

In his published research, however, he built his reputation with some pioneering work on sleep – a less controversial field. “At that time you couldn’t even talk about consciousness,” he says. But he kept on mulling over the question, and in 2004, he published his first description of his theory, which he has subsequently expanded and developed.

It begins with a set of axioms that define what consciousness actually is. Tononi proposes that any conscious experience needs to be structured, for instance – if you look at the space around you, you can distinguish the position of objects relative to each other. It’s also specific and "differentiated" – each experience will be different depending on the particular circumstances, meaning there are a huge number of possible experiences. And it is integrated. If you look at a red book on a table, its shape and colour and location – although initially processed separately in the brain – are all held together at once in a single conscious experience. We even combine information from many different senses – what Virginia Woolf described as the “incessant shower of innumerable atoms” – into a single sense of the here and now.

From these axioms, Tononi proposes that we can gauge a person’s (or an animal’s, or even a computer’s) consciousness from the level of “information integration” that is possible in the brain (or CPU). According to his theory, the more information that is shared and processed between many different components to contribute to that single experience, the higher the level of consciousness.

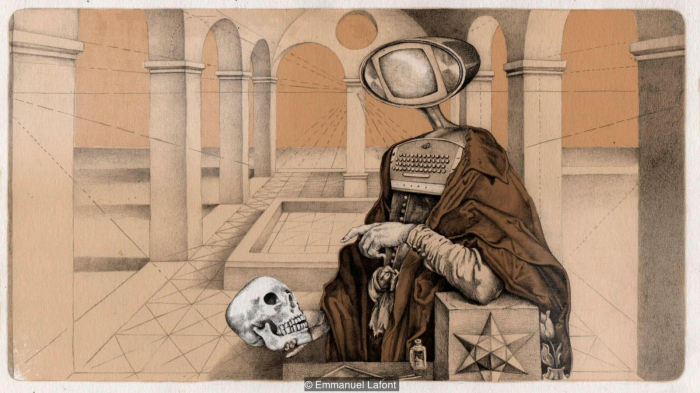

Giulio Tononi's theory asserts that consciousness arises from certain kinds of information processing (Credit: Emmanuel Lafont)

Perhaps the best way to understand what this means in practice is to compare the brain’s visual system to a digital camera. A camera captures the light hitting each pixel of the image sensor – which is clearly a huge amount of total information. But the pixels are not “talking” to each other or sharing information: each one is independently recording a tiny part of the scene. And without that integration, the theory says, the camera cannot have a rich conscious experience.

Like the digital camera, the human retina contains many sensors that initially capture small elements of the scene. But that data is then shared and processed across many different brain regions. Some areas will be working on the colours, adapting the raw data to make sense of the light levels so that we can still recognise colours even in very different conditions. Others examine the contours, which might involve guessing at the parts of an object that are obscured – if a coffee cup is in front of part of the book, for instance – so you still get a sense of the overall shape. Those regions will then share that information, passing it further up the hierarchy to combine the different elements – and out pops the conscious experience of all that is in front of us.
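As a rough illustration of the difference between independent pixels and units that exchange signals, the toy Python sketch below measures how much two on/off units “know” about each other, using mutual information. This is only a crude stand-in, not Tononi’s integrated information, which is far harder to compute; the function name and both probability tables are hypothetical, invented purely for illustration.

```python
import math

def mutual_information(joint):
    """Mutual information (in bits) between two binary units.

    joint[a][b] is the probability that unit A is in state a and unit B in state b.
    This is a crude proxy for shared information, NOT Tononi's integrated information.
    """
    p_a = [joint[0][0] + joint[0][1], joint[1][0] + joint[1][1]]  # marginal of A
    p_b = [joint[0][0] + joint[1][0], joint[0][1] + joint[1][1]]  # marginal of B
    mi = 0.0
    for a in (0, 1):
        for b in (0, 1):
            p = joint[a][b]
            if p > 0:
                mi += p * math.log2(p / (p_a[a] * p_b[b]))
    return mi

# Hypothetical "pixels" recording independently: every combination equally likely.
independent_pixels = [[0.25, 0.25], [0.25, 0.25]]

# Hypothetical coupled units that usually agree because they exchange signals.
coupled_units = [[0.45, 0.05], [0.05, 0.45]]

print(mutual_information(independent_pixels))     # 0.0
print(round(mutual_information(coupled_units), 3))  # 0.531
```

However many independent pixels you add, each pair still shares zero bits; only units whose states depend on one another register any shared information.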

The same goes for our memories. Unlike a digital camera’s library of photos, we don’t store each experience separately. They are combined and cross-linked to form a meaningful narrative. Every time we experience something new, it is integrated with that previous information. It is the reason that the taste of a single madeleine can trigger a memory from our distant childhood – and it is all part of our conscious experience.

At least, that’s the theory – and it’s compatible with many observations and experiments across medicine.

One study, published in 2015, examined the brains of participants under various forms of anaesthesia – including propofol and xenon. To get an idea of the brain’s capacity to integrate information, the team applied a magnetic field above the scalp to stimulate a small area of the cortex underneath – a standard non-invasive technique known as transcranial magnetic stimulation (TMS). In an awake brain, you would observe a complex ripple of activity in response to the TMS, with many different regions responding, which Tononi takes to be a sign of information integration between the different groups of neurons.

But the brains of the people under propofol and xenon did not show that response – the brainwaves generated were much simpler in form compared to the hubbub of activity in the awake brain. By altering the levels of important neurotransmitters, the drugs appeared to have “broken down” the brain’s information integration – and this corresponded to the participants’ complete lack of awareness during the experiment. Their inner experience had faded to black.

Drug-induced fantasies

As a further comparison, the team also looked at participants under ketamine. Although the drug renders you unresponsive to the outside world – which is why it is also used as an anaesthetic – people who take it frequently report wild dreams, as opposed to the pure “blank” experienced under propofol or xenon. Sure enough, Tononi’s team found that the responses to the TMS were far more complex than those under the other anaesthetics, reflecting the participants’ altered state of consciousness. They were disconnected from the outside world, but their minds were still very much turned on during their drug-induced fantasies.

Consciousness remains one of science's greatest mysteries (Credit: Emmanuel Lafont)

Tononi has found similar results when examining different sleep stages. During non-REM sleep – in which dreams are rarer – the responses to TMS were less complex; but during REM sleep, which frequently coincides with dream consciousness, the information integration appeared to be higher.

He emphasises that this isn’t “proof” that his theory is correct, but it shows that he could be working on the right lines. “Let’s say that if we had obtained the opposite result, we would have been in trouble.”

Some people lack a cerebellum – containing more than half the neurons in the whole brain – yet they are still capable of conscious perception

Tononi’s theory also chimes with the experiences of people with various forms of brain damage. The cerebellum, for instance, is the walnut-shaped, pinkish-grey mass at the base of the brain, and its prime responsibility is coordinating our movements. It contains four times as many neurons as the cortex, the bark-like outer layer of the brain – well over half the total number of neurons in the whole brain. Yet some people lack a cerebellum (either because they were born without it, or because they lost it through brain damage) and they are still capable of conscious perception, leading a relatively long and “normal” life without any loss of awareness.

These cases wouldn’t make sense if the sheer number of neurons were what mattered for the creation of conscious experience. They fit Tononi’s theory, however: the cerebellum’s processing mostly happens locally rather than through widespread exchange and integration of signals, so the theory predicts it would play only a minimal role in awareness.

Measures of the brain’s responses to TMS also seem to predict whether patients in a non-communicative or vegetative state are conscious – a finding with potentially profound clinical applications.

The integrated information theory could help predict whether computers will ever become conscious (Credit: Emmanuel Lafont)

Great claims require great evidence, of course – and few scientific questions are more profound than the mystery of consciousness.

Tononi’s methods so far only offer a very crude “proxy” of the brain’s information integration – and to really prove his theory’s worth, more sophisticated tools will be required that can precisely measure processing in any kind of brain.

Daniel Toker, a neuroscientist at the University of California, Berkeley, says the idea that information integration is necessary for consciousness is very “intuitive” to other scientists, but much more evidence is required. “The broader perspective in the field is that it is an interesting idea, but pretty much completely untested,” he says.

It all comes down to mathematics. Using previous techniques, the time taken to measure information integration across a network increases “super exponentially” with the number of nodes you are considering – meaning that, even with the best technology, the computation could last longer than the lifespan of the universe. But Toker has recently proposed an ingenious shortcut for these calculations that may bring that down to a couple of minutes, which he has tested with measurements from a couple of macaques. This could be a first step towards putting the theory on a much firmer experimental footing. “We’re really in the early stages of all this,” says Toker.
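To get a feel for what “super exponentially” means, consider one assumption: that a brute-force calculation has to check every way of dividing the network into separate groups of nodes. The number of such divisions is given by the Bell numbers, which grow faster than any fixed exponential such as 2ⁿ. The Python sketch below only counts those divisions; it does not compute integrated information, and the function name is hypothetical. It is not a description of the algorithms Tononi or Toker actually use.

```python
def bell_numbers(n_max):
    """Return [B_0, ..., B_n_max] using the Bell triangle.

    B_n counts the ways to split a set of n nodes into non-empty, unlabelled groups.
    """
    bells = [1]  # B_0: the empty network has exactly one (empty) division
    row = [1]
    for _ in range(n_max):
        # Each new row starts with the last entry of the previous row...
        next_row = [row[-1]]
        # ...and each further entry adds the entry above-left to its left neighbour.
        for value in row:
            next_row.append(next_row[-1] + value)
        row = next_row
        bells.append(row[0])  # the first entry of each row is the next Bell number
    return bells

bells = bell_numbers(20)
for n in (5, 10, 20):
    print(n, 2 ** n, bells[n])
# prints: 5 32 52 / 10 1024 115975 / 20 1048576 51724158235372
```

With just 20 nodes, doubling (2ⁿ) gives about a million possibilities, while the number of possible divisions exceeds 50 trillion; real brains have billions of neurons.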

Only then can we begin to answer the really big questions – such as comparing the consciousness of different types of brain. Even if Tononi’s theory doesn’t prove to be true, however, Toker thinks it’s helped to push other neuroscientists to think more mathematically about the question of consciousness – which could inspire future theories.

And should integrated information theory be right, it would be truly game changing – with implications far beyond neuroscience and medicine. Proof of consciousness in a creature such as a lobster could transform the fight for animal rights, for instance.

It would also answer some long-standing questions about artificial intelligence. Tononi argues that the basic architecture of the computers we have today – made from networks of transistors – precludes the level of information integration that is necessary for consciousness. So even if they can be programmed to behave like a human, they would never have our rich internal life.

“There is a sense, according to some, that sooner rather than later computers may be cognitively as good as we are – not just in some tasks, such as playing Go, chess, or recognising faces, or driving cars, but in everything,” says Tononi. “But if integrated information theory is correct, computers could behave exactly like you and me – indeed you might [even] be able to have a conversation with them that is as rewarding, or more rewarding, than with you or me – and yet there would literally be nobody there.” Again, it comes down to the question of whether intelligent behaviour has to arise from consciousness – and Tononi’s theory suggests it does not.

He emphasises this is not just a question of computational power, or the kind of software that is used. “The physical architecture is always more or less the same, and that is always not at all conducive to consciousness.” So thankfully, the kind of moral dilemmas seen in series like Humans and Westworld may never become a reality.

It could even help us understand the ways we interact with each other. Thomas Malone, director of the Massachusetts Institute of Technology's Center for Collective Intelligence and author of the book Superminds, has recently applied the theory to teams of people – in the laboratory and in the real world, including the editors of Wikipedia entries. He has shown that estimates of the integrated information shared by the team members could predict group performance on various tasks. Although the concept of “group consciousness” may seem like a stretch, he thinks that Tononi’s theory might help us to understand how large bodies of people sometimes begin to think, feel, remember, decide, and react as one entity.

He cautions this is still very much speculation: we first need to be sure that integrated information is a sign of consciousness in the individual. “But I do think it’s very intriguing to consider what this might mean for the possibility of groups to be conscious.”

For now, we still can’t be certain if a lobster, computer or even a society is conscious or not, but in the future, Tononi’s theory may help us to understand ‘minds’ that are very alien to our own.

BBC
